Data¶

Data is the raw material for all experiments. All your data is scoped to a project, and you can access it from the « Data » section of the collapsible sidebar.

The Data section holds 5 kinds of assets:

- Datasets: input for training, predictions and pipelines

- Image folders: folders containing images

- Connectors: credentials used to access external databases or filesystems

- Data sources: information about the location (database, table, folder) of a remote dataset

- Exporters: information about the remote table used for exporting predictions

Datasets¶

Datasets are tabular data created from a flat file upload, a data source or a pipeline execution. They serve as input for training, prediction and pipeline execution.

Datasets

- may be created from a file upload

- may be created from a data source

- may be created from a pipeline output

- may be downloaded

- may be exported with exporters

- may be used as pipeline input

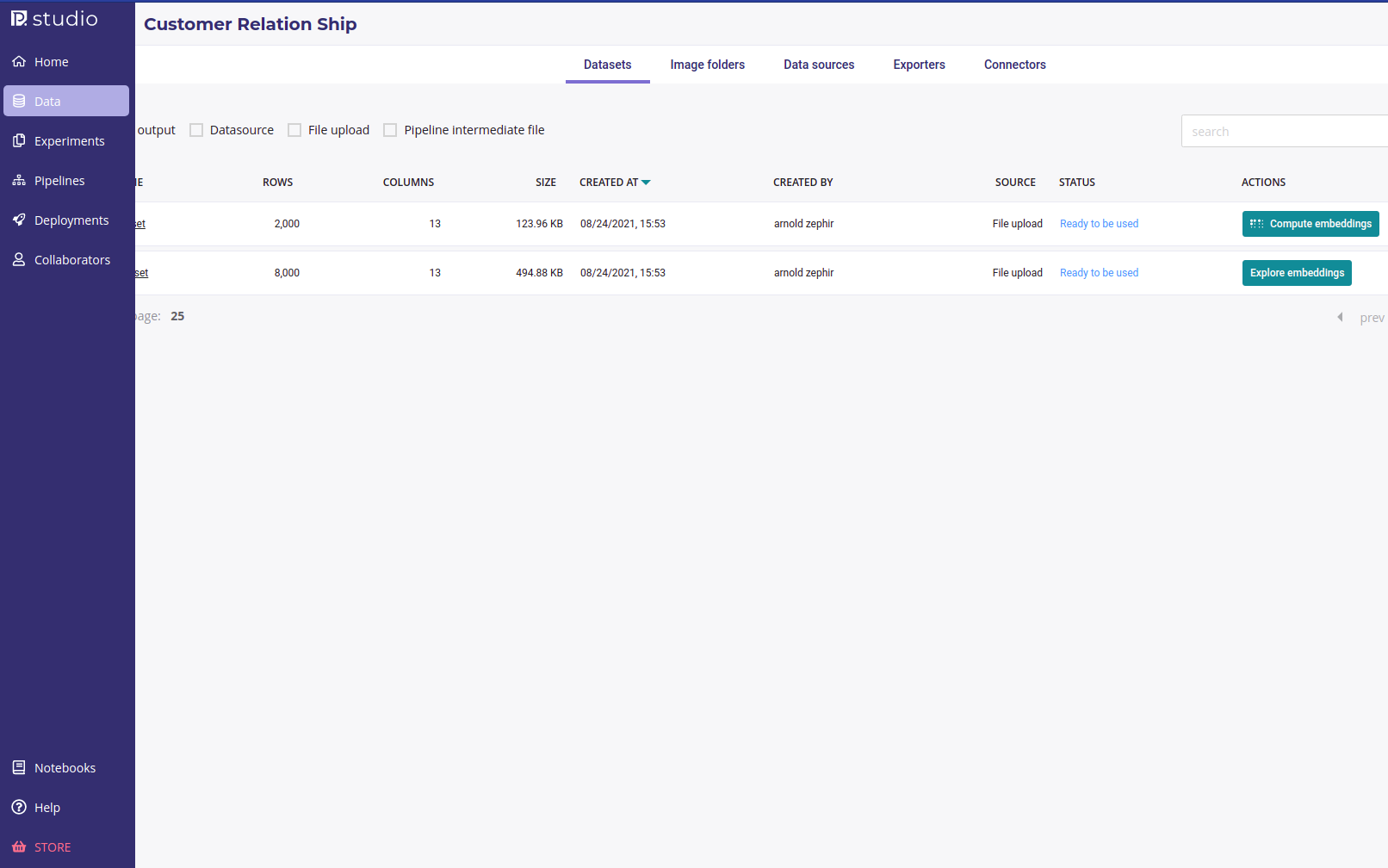

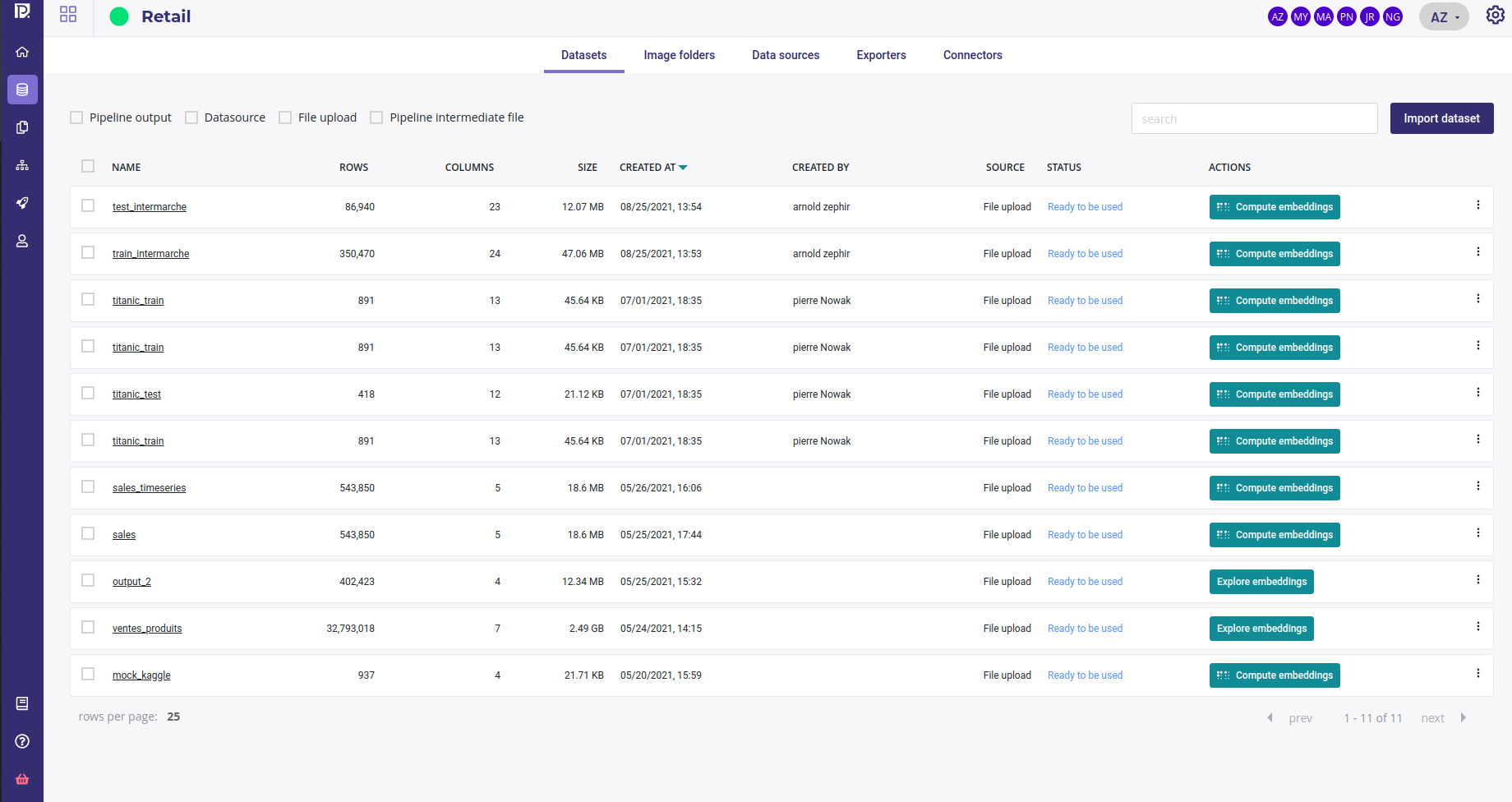

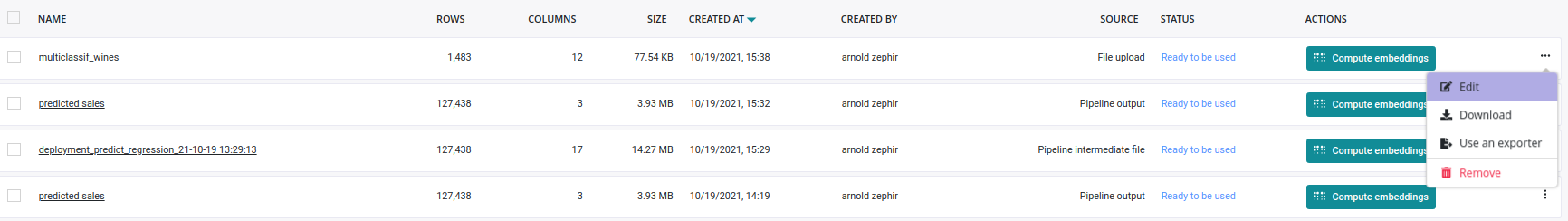

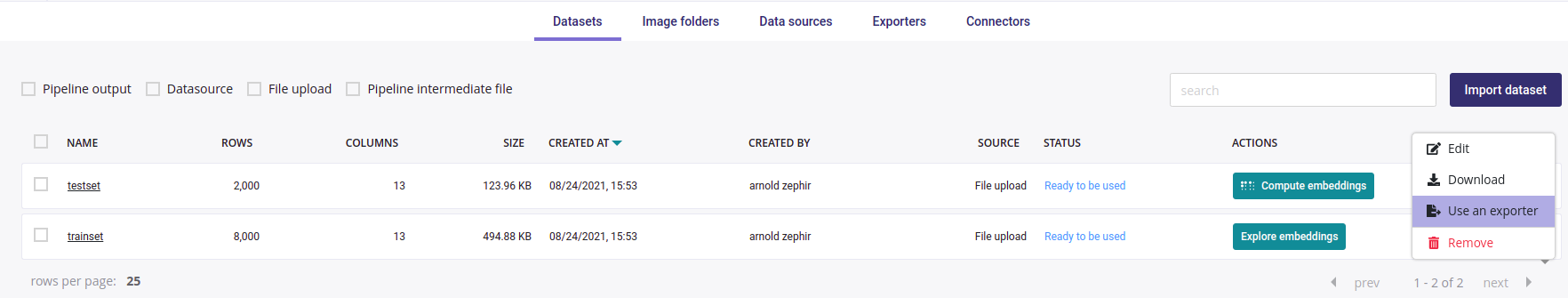

When you click on the Datasets tab, you see a list of your datasets with:

- filter checkboxes for the origin of the dataset (pipeline output, data source, file upload, pipeline intermediate file)

- a search box that filters on dataset names

- the name of each dataset and information about it

- its status. A dataset may be unavailable for a short time after its creation while it is being parsed.

- a button to compute embeddings, or to explore them if they are already computed (see the guide about exploring data)

Create a new dataset¶

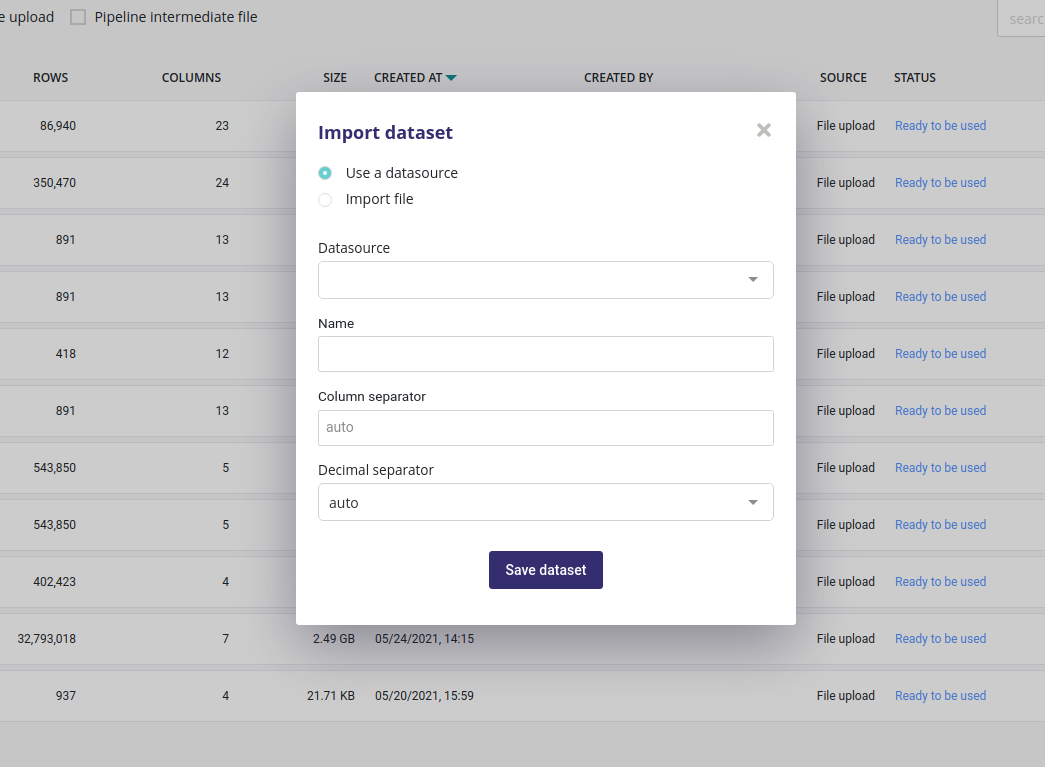

To create new experiments you need a dataset. You can create one by clicking on the « import dataset » button.

Datasets are always created from tabular data (database tables or files). You can import data from a previously created data source or from a flat CSV file.

For data coming from a file (upload, FTP, bucket, S3,…) you can specify the column and decimal separators, but the auto-detection algorithm works in most cases.
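To get a feel for what separator detection involves, here is a minimal sketch in plain Python. It is not the platform's algorithm, which is not documented here; the function names, the candidate delimiter list and the heuristics are all assumptions made for illustration.

```python
import re

# Assumed candidate column separators; a real detector may consider more.
CANDIDATE_DELIMITERS = [",", ";", "\t", "|"]


def guess_column_separator(sample: str) -> str:
    """Pick the candidate that appears the same non-zero number of times on every line."""
    lines = [line for line in sample.splitlines() if line.strip()]
    best, best_count = ",", 0  # fall back to a comma when nothing is consistent
    for delim in CANDIDATE_DELIMITERS:
        counts = {line.count(delim) for line in lines}
        if len(counts) == 1:  # same count on every line
            count = counts.pop()
            if count > best_count:
                best, best_count = delim, count
    return best


def guess_decimal_separator(sample: str, column_sep: str) -> str:
    """Return ',' if a data cell looks like '1,5', otherwise '.'."""
    if column_sep == ",":
        return "."  # a comma cannot be both column and decimal separator
    lines = [line for line in sample.splitlines() if line.strip()]
    for line in lines[1:]:  # skip the header row
        for cell in line.split(column_sep):
            if re.fullmatch(r"-?\d+,\d+", cell.strip()):
                return ","
    return "."


sample = "name;price\napple;1,5\npear;2,25\n"
column_sep = guess_column_separator(sample)
decimal_sep = guess_decimal_separator(sample, column_sep)
print(repr(column_sep), repr(decimal_sep))  # prints ';' ','
```

Running a check like this on the first lines of a file before uploading it can tell you whether you are likely to need the manual separator fields.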

When you click on the « save dataset » button, the dataset is immediately displayed in your list of datasets but is not available for a few seconds. Once a dataset is ready, its status changes to « Ready to be used », and you can then compute embeddings and use it for training and predicting.

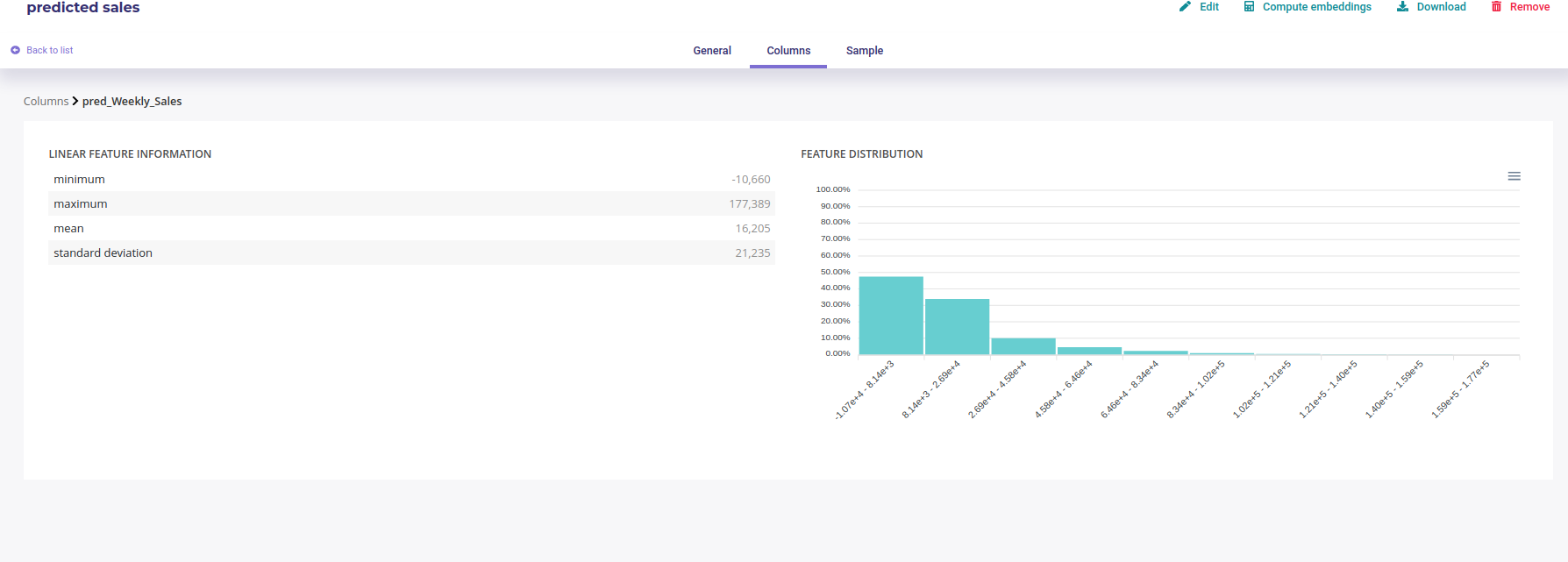

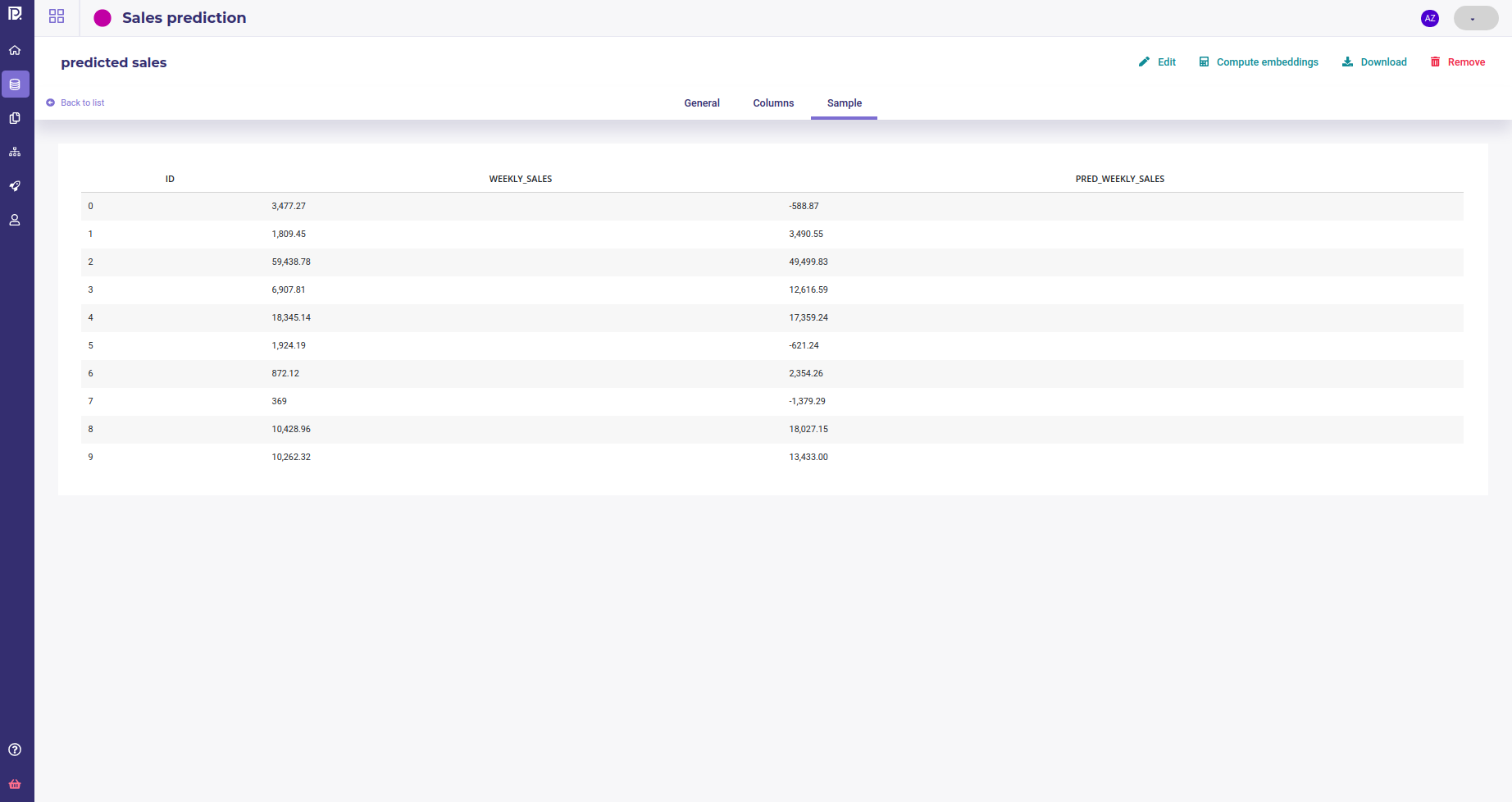

Analyse dataset¶

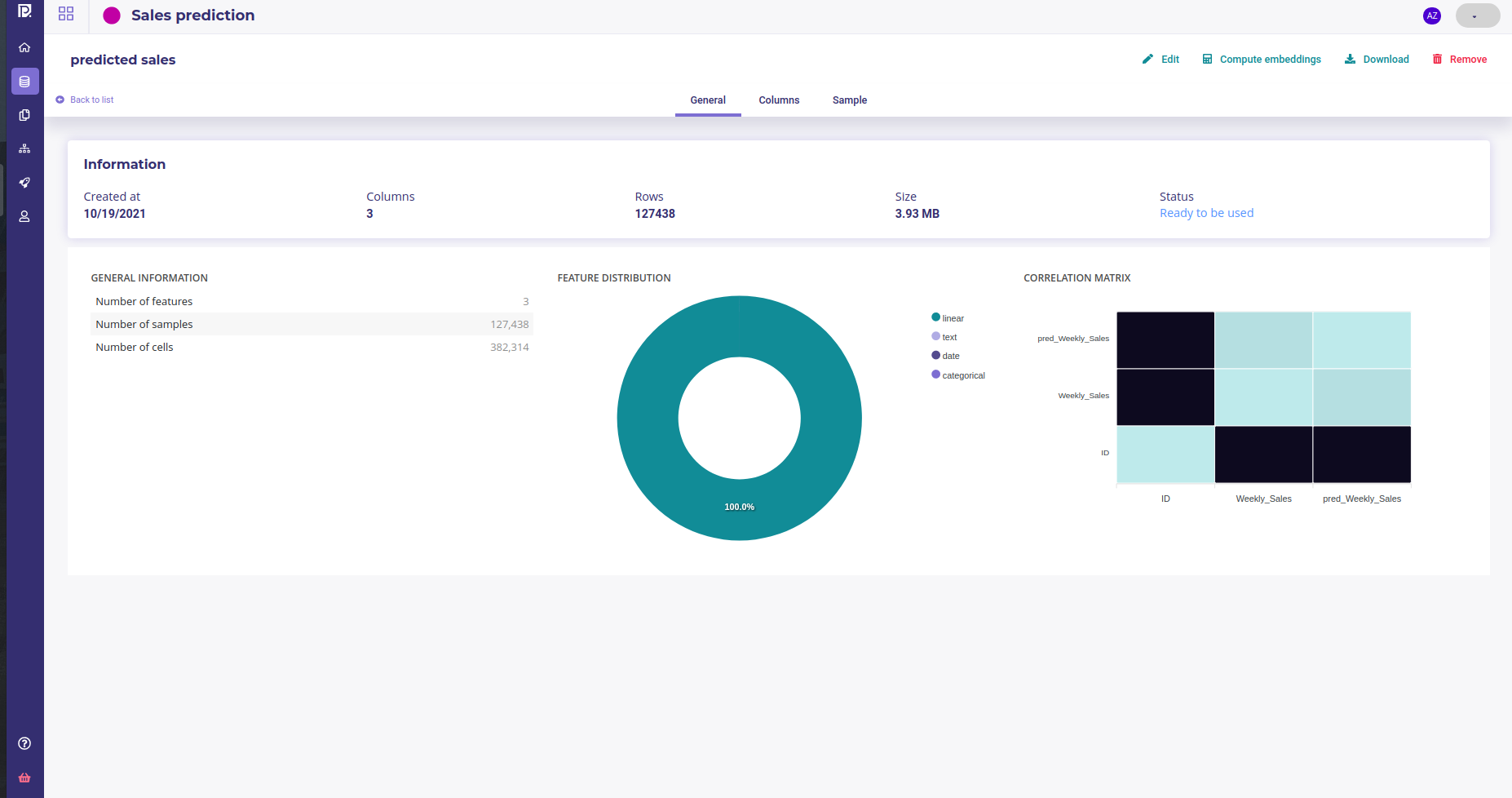

Once a dataset is ready, you get access to a dedicated page with details about it:

- General Information: dataset summary, feature distribution and correlation matrix of its features

- Columns: information about its columns. You can click on a column to get more information about it, such as its distribution

- Sample: a very short sample of the dataset

Edit and delete¶

You can edit the name of a dataset, download it or remove it from your storage, either from the top navigation menu of the dataset details page or from the three-dots menu in the list of your datasets.

When you remove a dataset, local data are completely removed. Data source data are left untouched.

Compute embeddings¶

See the Complete Guide for exploring data.

Image folders¶

Image folders store your images. They are the source material for image experiments (classification, object detection, …). Image experiments require an image folder.

Image folders

- may be created from a file upload

- cannot use data sources or connectors

- can be downloaded

- cannot be exported

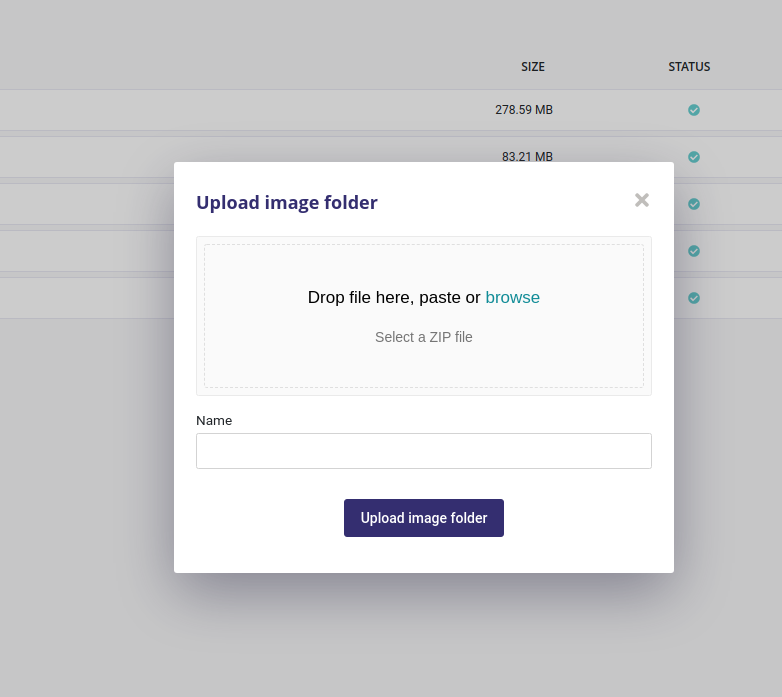

Create a new image folder¶

When you click on the « Upload Image Folder » button, you can upload a zip file either by dragging and dropping it or by selecting it in your local file browser. The zip file must contain only images, but they can be organized into folders.

After giving it a name, click on « upload image folder » and wait. Your images will be available for experiments in a few seconds.
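Since the archive must contain only images, it can help to build it with a small script that filters out everything else. The sketch below uses only the Python standard library; the function name and the list of image extensions are assumptions, as the accepted image formats are not listed here.

```python
import zipfile
from pathlib import Path

# Assumed set of image extensions; adjust to the formats you actually use.
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".gif", ".bmp"}


def zip_images(source_dir: str, archive_path: str) -> list[str]:
    """Zip every image under source_dir, keeping the sub-folder layout.

    Returns the archive-relative names of the files that were added.
    """
    source = Path(source_dir)
    added = []
    with zipfile.ZipFile(archive_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for path in sorted(source.rglob("*")):
            # Skip directories and any file that is not an image.
            if path.is_file() and path.suffix.lower() in IMAGE_EXTENSIONS:
                arcname = path.relative_to(source).as_posix()
                archive.write(path, arcname)
                added.append(arcname)
    return added
```

For example, `zip_images("my_images", "my_images.zip")` would produce an archive ready to drop into the upload dialog, with non-image files such as notes or metadata left out.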

Edit and remove¶

You can edit the name of your folder from the list of image folders, using the three-dots menu on the right. You can also remove the image folder from your storage from this menu.

Connectors¶

Connectors are used to hold credentials used to access external databases or filesystems. You need to create a connector first to use Datasources and Exporters.

Connectors

- may be used for creating a data source

- may be used for creating an exporter

In the Prevision.io platform you can set up connectors in order to connect the application directly to your data sources and generate datasets.

The general logic to import data in Prevision.io is the following:

- Connectors hold credentials & connection information (url, etc.)

- Datasources define how the data will be extracted (SQL query, file path)

- Datasets are used to create experiments and analyses

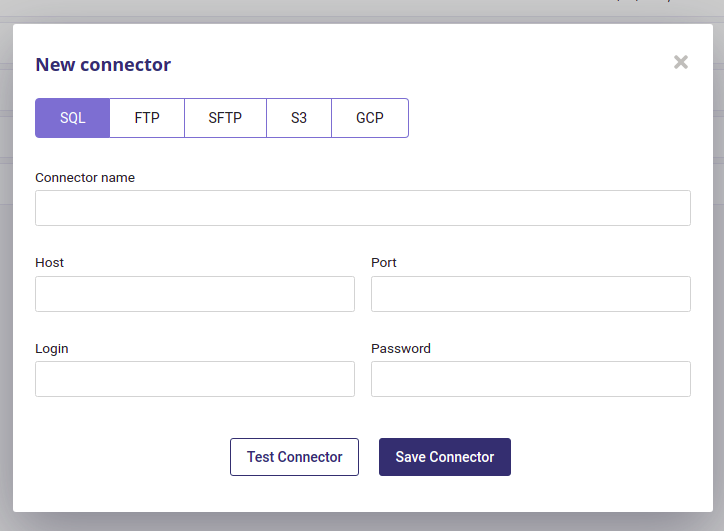

Several connector types are available:

- SQL databases

- FTP server

- SFTP server

- Amazon S3 datastore

- GCP

Create Connector¶

By clicking on the “new connector” button, you can create and configure a new connector. The information you need to provide depends on the connector's type and allows the platform to connect to your database or file server.

Note

Tip: once your connector is configured, you can test it by clicking the “test connector” button.

Most connectors require:

- a host

- a port

- a username

- a password

but S3 and Google Cloud Platform connectors require specific information from your AWS and GCP accounts.
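If you keep connector settings in a script or a configuration file before entering them in the form, a small check for the fields listed above can catch omissions early. This is only an illustrative sketch: `ConnectorConfig`, `missing_fields` and the type labels are hypothetical names, not part of any Prevision.io API, and it assumes that the SQL, FTP and SFTP connectors are the host-based ones.

```python
from dataclasses import dataclass

# Hypothetical type labels; the platform's own identifiers may differ.
HOST_BASED_TYPES = {"sql", "ftp", "sftp"}
CLOUD_TYPES = {"s3", "gcp"}


@dataclass
class ConnectorConfig:
    name: str
    type: str
    host: str = ""
    port: int = 0
    username: str = ""
    password: str = ""


def missing_fields(config: ConnectorConfig) -> list[str]:
    """Return the host-style fields that are still empty for this connector."""
    if config.type in CLOUD_TYPES:
        # S3 and GCP need account-specific information that is not modelled here.
        return []
    if config.type not in HOST_BASED_TYPES:
        raise ValueError(f"unknown connector type: {config.type}")
    required = ("host", "port", "username", "password")
    return [name for name in required if not getattr(config, name)]


draft = ConnectorConfig("warehouse", "sql", host="db.example.com", port=5432, username="reader")
print(missing_fields(draft))  # prints ['password']
```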

Once connectors have been added, the list of all your connectors appears under the new connector configuration area. By clicking on the action button, you can:

- test the connector

- edit the connector

- delete the connector

Once at least one connector is correctly configured, you can use the Data sources menu to create CSV files from your database or file server.

Data sources¶

Data sources

- need a connector

- may be used as input of a pipeline

- may be used to import a dataset

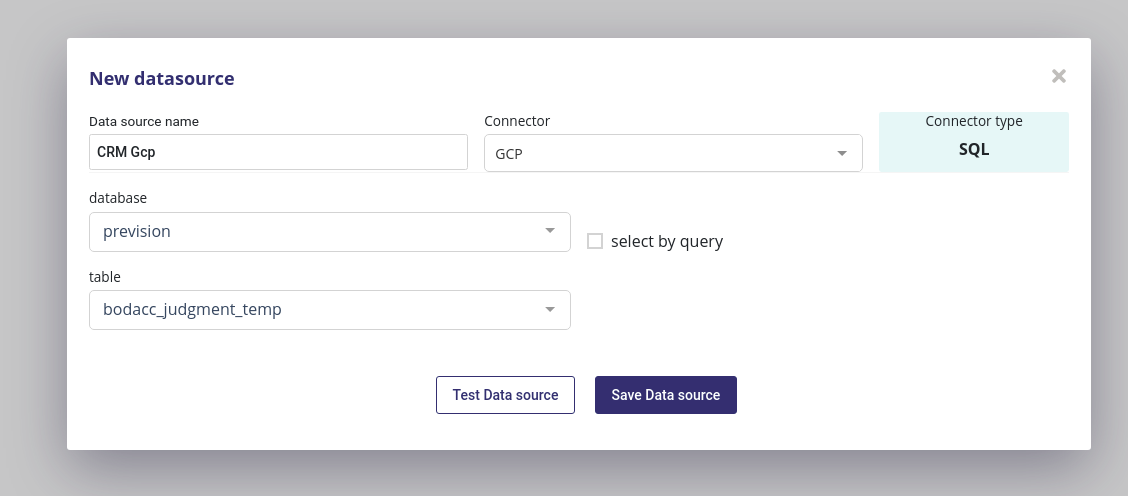

Datasources represent « dynamic » datasets, whose data can be hosted on an external database or filesystem. Using a pre-defined connector, you can specify a query, table name or path to extract the data.

A datasource is created from a connector, so you always need to select one when creating a new datasource. Depending on the type of connector, you then need to input:

- a database name and a table name for SQL databases

- a path to a file, starting from the root of your server, for storage-like connectors (FTP, SFTP, Amazon S3 and GCP)

You can test your datasource and then save it for later use.
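The rule above (SQL connectors need a database and a table, storage-like connectors need a path) can be expressed as a small validation step. The sketch below is a hypothetical model for illustration only; `DataSourceSpec` and the connector type labels are assumed names and do not come from the platform.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical type labels, grouped as in the description above.
SQL_TYPES = {"sql"}
STORAGE_TYPES = {"ftp", "sftp", "s3", "gcp"}


@dataclass
class DataSourceSpec:
    name: str
    connector_type: str
    database: Optional[str] = None
    table: Optional[str] = None
    path: Optional[str] = None

    def validate(self) -> None:
        """Raise ValueError if the fields do not match the connector type."""
        if self.connector_type in SQL_TYPES:
            if not (self.database and self.table):
                raise ValueError("SQL data sources need a database name and a table name")
        elif self.connector_type in STORAGE_TYPES:
            if not self.path:
                raise ValueError("storage data sources need a path to a file")
        else:
            raise ValueError(f"unknown connector type: {self.connector_type}")


# A SQL data source is valid once both database and table are set.
DataSourceSpec("orders", "sql", database="shop", table="orders").validate()
# A storage data source only needs the file path.
DataSourceSpec("logs", "sftp", path="/exports/logs.csv").validate()
```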

Exporters¶

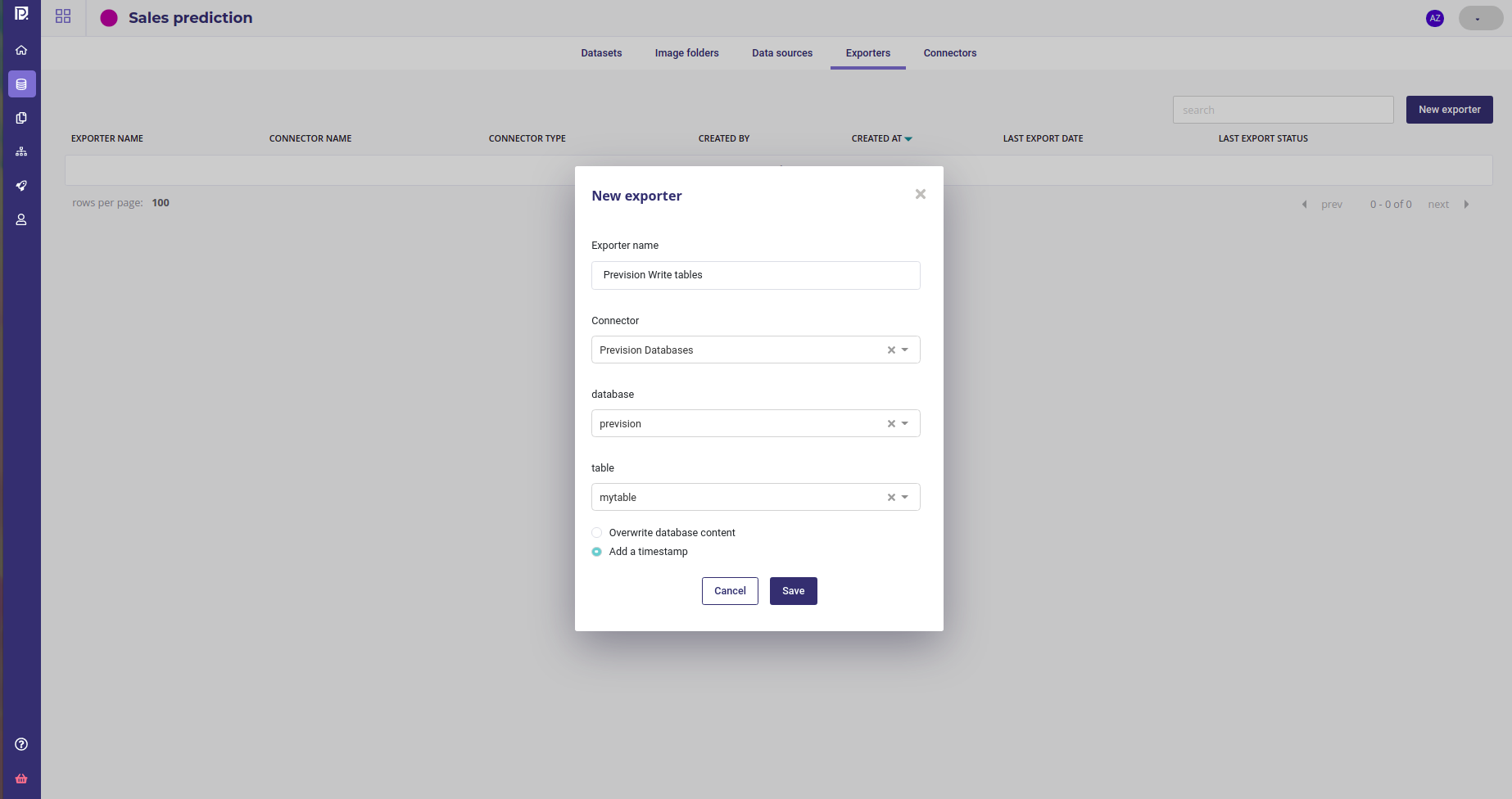

In the same way that Datasources are used to import data into Prevision.io, Exporters are used to write the data generated in the platform to an external database or filesystem. They also require a connector, and have similar configuration options.

Exporters

- need a connector

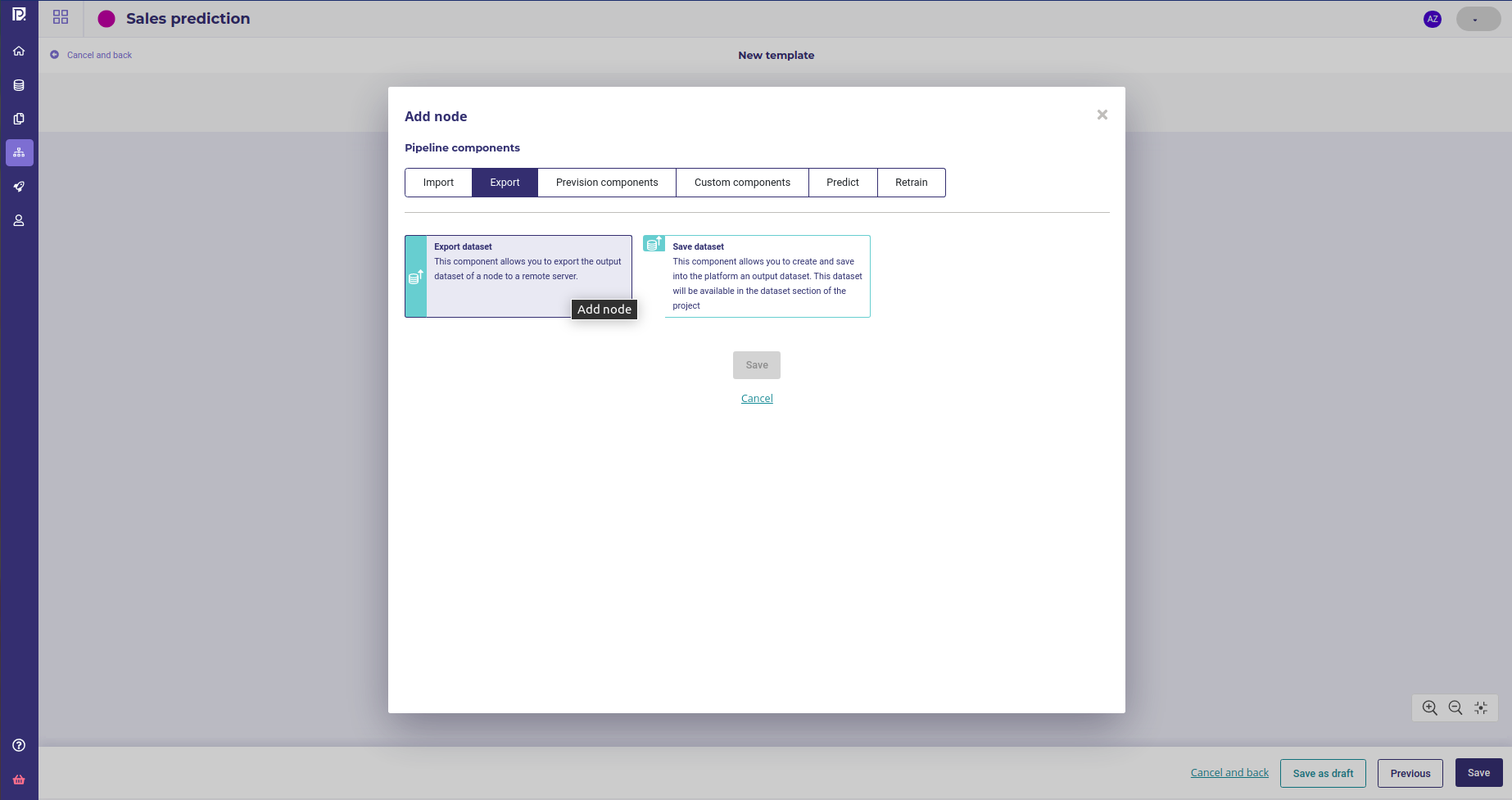

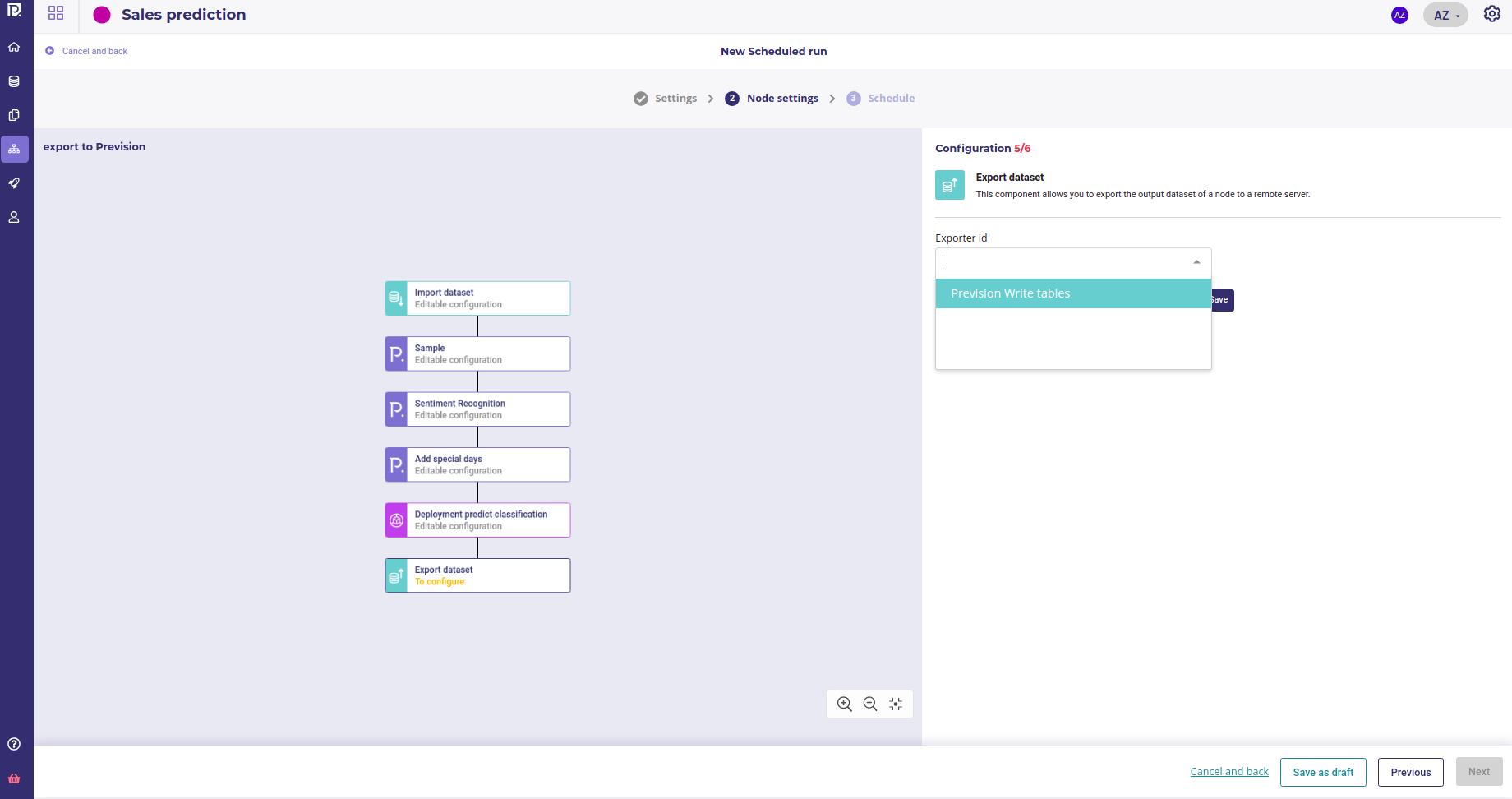

- may be use as output of a pipeline

- may be used to export dataset

When you click on the new exporter button in the Exporters tab, you are prompted to enter a name and select a previously created connector.

Once a connector is selected, you need to fill in more information about the export destination, depending on the connector type. For example, for an SQL connector, you have to enter a database name and a table name.

You then have two options:

- overwrite the table each time you export data using this exporter

- create a new table with a timestamp in its name each time you export data using this exporter
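The difference between the two options can be illustrated with a local SQLite database. This is a sketch of the general idea, not of how the platform performs exports: the `export_rows` helper, the two-column table layout and the `%Y%m%d_%H%M%S` timestamp format are all assumptions.

```python
import sqlite3
from datetime import datetime


def export_rows(conn, table, rows, overwrite, now=None):
    """Write (id, prediction) rows and return the name of the table written."""
    if not table.isidentifier():
        raise ValueError(f"unsafe table name: {table!r}")
    if overwrite:
        # Option 1: always reuse the same table, replacing its content.
        target = table
        conn.execute(f'DROP TABLE IF EXISTS "{target}"')
    else:
        # Option 2: a fresh table per export, named with a timestamp suffix.
        stamp = (now or datetime.now()).strftime("%Y%m%d_%H%M%S")
        target = f"{table}_{stamp}"
    conn.execute(f'CREATE TABLE "{target}" (id INTEGER, prediction REAL)')
    conn.executemany(f'INSERT INTO "{target}" VALUES (?, ?)', rows)
    conn.commit()
    return target


conn = sqlite3.connect(":memory:")
export_rows(conn, "preds", [(1, 0.5)], overwrite=True)
export_rows(conn, "preds", [(2, 0.1), (3, 0.2)], overwrite=True)  # replaces the first export
name = export_rows(conn, "preds", [(4, 0.9)], overwrite=False, now=datetime(2024, 1, 2, 3, 4, 5))
print(name)  # prints preds_20240102_030405
```

Overwriting keeps a single table that always reflects the latest export, which suits downstream systems that read a fixed table name. Timestamped tables keep a history of every export, at the cost of consumers having to find the most recent one.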

Once you have clicked on the « save » button, your exporter is available to pipelines as a terminal node, to push datasets or predictions into an external database.

Typical uses for exporters are:

- delivering predictions to an external system

- delivering a transformed dataset to an external system

A typical pipeline: import data, apply transformations, make predictions and export the results to a database.

Note that once you have an exporter, you can save any of your datasets to the exporter target from the datasets list by using the three-dots menu.